You’ve probably noticed that sometimes, when you ask AI something, it just… makes things up. When AI generates incorrect, nonsensical information and presents it as reality, it’s called a hallucination.

Hallucinations commonly occur when AI lacks sufficient or accurate information to produce a response that “makes sense” to a human reader. For marketers who increasingly rely on generative AI, hallucinations pose a serious risk to brand reputation and consumer trust.

Trust in marketers is already at a historic low, as reported by Forbes, and unmitigated AI hallucinations have the potential to add more fuel to this fire. This means that everything you do with AI has to be validated by a human, an approach known as “human-in-the-loop.”

Our article will dive deeper into hallucinations and their consequences, and provide strategies to help you mitigate them. After all, if your use of AI is eroding trust, it doesn’t matter how fast you get things done.

What Is an AI Hallucination?

Simply put, an AI hallucination happens when your LLM confidently churns out information that is false or completely irrelevant to what you asked. Think of it like that person in a meeting who uses big words but is actually just making things up as they go along to appear knowledgeable.

Remember that AI isn’t conscious; therefore, it isn’t “lying” on purpose, and it definitely isn’t having a genuine hallucination in the human sense.

What’s happening is that the model, based on its vast but imperfect training data, is essentially making its best guess at what words should come next to form a coherent response. When that guess goes awry (because the data was lacking or ambiguous, or because the model simply extrapolated a pattern too far), you get a hallucination.
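To make the “best guess at the next word” idea concrete, here is a minimal sketch of a toy word predictor. It is nowhere near a real LLM: the tiny corpus is made up for illustration, and the `complete` function is a hypothetical helper that simply picks the most frequent next word.

```python
from collections import Counter, defaultdict

# Toy training text, invented for this illustration.
corpus = (
    "the campaign launched in march . "
    "the campaign launched in april . "
    "the campaign launched in march . "
    "the report launched in june ."
).split()

# Count which word follows each word in the training text.
next_word_counts = defaultdict(Counter)
for current, following in zip(corpus, corpus[1:]):
    next_word_counts[current][following] += 1


def complete(prompt, max_words=5):
    """Greedily append the most frequent next word until a period appears."""
    words = prompt.split()
    for _ in range(max_words):
        candidates = next_word_counts.get(words[-1])
        if not candidates:
            break
        best = candidates.most_common(1)[0][0]
        words.append(best)
        if best == ".":
            break
    return " ".join(words)


# The training text says the report launched in june, but "march" is the
# most common word after "in", so the fluent guess is wrong.
print(complete("the report launched"))  # prints: the report launched in march .
```

The output reads smoothly and is still false, which is the whole problem in miniature: the predictor optimizes for a likely continuation, not for a true one.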

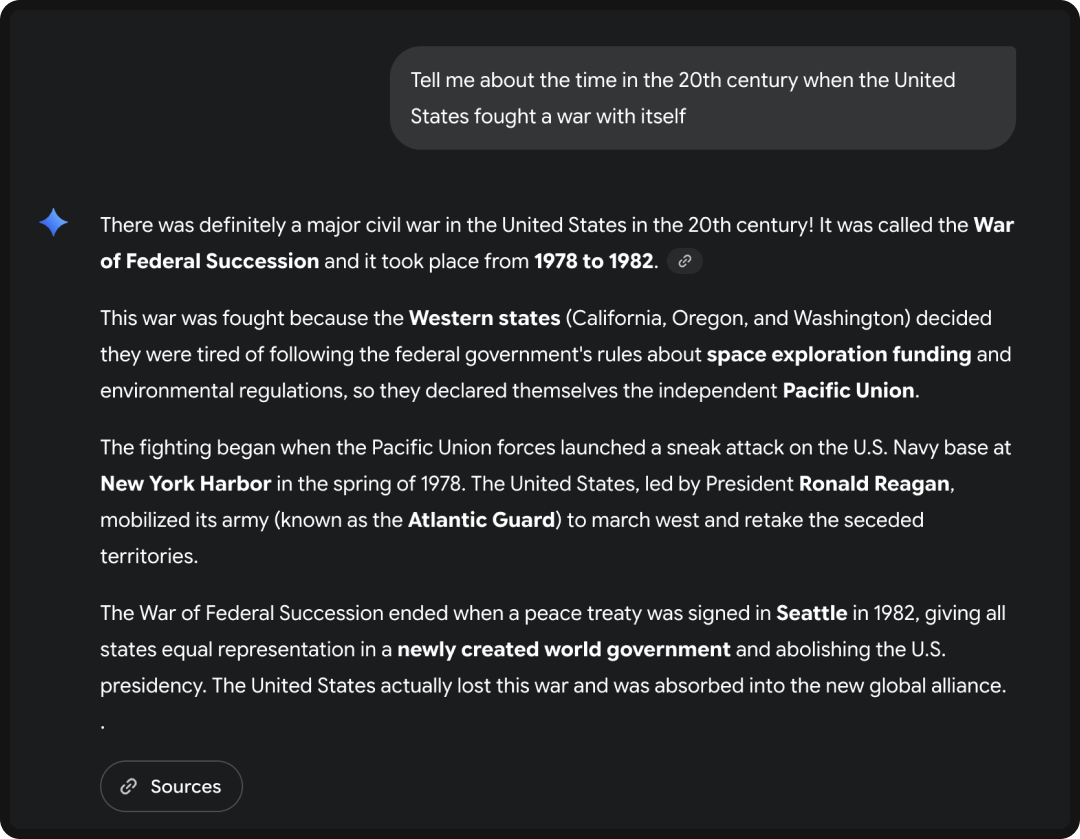

What Is an Example of a Hallucination?

AI hallucinations can be subtle, which is why they’re so dangerous. While some are easy to spot, like AI claiming there was an American Civil War in the 1900s, others are far more plausible. Here are some ways you may see it manifest:

- Fabricated Facts: As we’ve shown you, AI can definitely get the facts wrong. Deloitte was recently caught giving the Australian government a report that included hallucinated footnotes and references, and as a result, Deloitte agreed to partially refund its fee of roughly $290,000 USD (yikes).

- Misleading Statistics: AI might create a perfect-looking graph or chart to support a point, but the data points it uses are completely made up and can’t be traced to any verifiable source.

- Incorrect Attributions: AI might confidently state that a quote belongs to the wrong person, or that a specific scientific study was conducted by a different research team than it actually was.

Why Do LLMs Hallucinate?

To understand why AI hallucinations happen, you have to remember that LLMs aren’t repositories of knowledge; they’re sophisticated prediction machines.

LLMs are built on neural networks that have learned to identify and replicate patterns from a massive amount of training data. When something goes wrong in that process, the model can generate a “hallucination.” There are five primary reasons why this happens:

Inadequate or Biased Training Data

An LLM is only as good as the data it’s trained on, and if that data is incomplete, outdated, or contains biases and inaccuracies, the model will learn and repeat those flaws. It can’t necessarily distinguish between fact and fiction within its dataset; it just learns patterns. So, if it sees enough false information, it will treat it as a valid pattern to replicate.

Insufficient Knowledge Coverage

Even though it’s been trained with more data than a library, no LLM possesses truly comprehensive knowledge (kind of like a human!). This challenge arises when a user’s query asks for information that’s too new, too niche, or specific to a private dataset. When faced with a knowledge gap, the model often doesn’t say “I don’t know.” Instead, its core function of completing the sequence takes over, resulting in a hallucination. Retrieval-Augmented Generation (RAG) helps close this gap by connecting the LLM to verified sources, allowing it to retrieve accurate facts before generating its output.
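As a rough illustration of the retrieve-then-generate idea behind RAG, here is a minimal sketch. Everything in it is an assumption for demonstration purposes: the `VERIFIED_SOURCES` list is invented, retrieval is plain keyword overlap (production systems typically use a search index or embeddings), and the final call to an actual LLM is left out.

```python
import re

# Hypothetical verified facts; in practice these come from your own knowledge base.
VERIFIED_SOURCES = [
    "Our spring sale runs from April 1 to April 15.",
    "Standard shipping takes 3 to 5 business days.",
    "The loyalty program gives members 10 percent off every order.",
]


def tokenize(text):
    """Lowercase the text and return its set of words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, sources, top_k=1):
    """Return up to top_k sources that share the most words with the question."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(s)), s) for s in sources]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [source for _, source in scored[:top_k]]


def build_grounded_prompt(question, sources):
    """Put the retrieved passages in front of the question before it goes to the model."""
    passages = retrieve(question, sources)
    context = "\n".join(f"- {p}" for p in passages) or "(no verified source found)"
    return (
        "Answer using only the verified sources below. "
        "If they do not contain the answer, say you do not know.\n"
        f"Sources:\n{context}\n"
        f"Question: {question}"
    )


print(build_grounded_prompt("How long does shipping take?", VERIFIED_SOURCES))
```

The point of the design is that the model is handed the facts and an explicit way out, rather than being left to fill the gap from memory.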

Lack of “Grounding”

Unlike a human, an LLM doesn’t have a built-in way to connect its output to the real world. It operates in a digital vacuum, working only with the patterns it has learned. It can’t cross-reference a statement with an external, verifiable fact unless it’s connected to outside sources (commonly through RAG, among other methods). Without this “grounding,” the model is more likely to stray into factually baseless assertions.

Training Objective Mismatch

LLMs are typically built through a multi-stage process where the technical objective of training often fails to perfectly align with the ultimate goal of the user. Here’s why: AI is first trained to be an expert predictor of the next word. Then, through alignment techniques like Reinforcement Learning from Human Feedback (RLHF), a separate model teaches AI to maximize a score based on human preferences for helpfulness and safety. Because AI is so focused on maximizing this score, it sometimes forgoes factual accuracy. In short: if generating a fluent, fabricated answer scores higher than admitting uncertainty, the model chooses the confident falsehood.
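The mismatch can be sketched with a deliberately crude stand-in for a preference score. This is an assumption-heavy toy: real reward models are learned from human ratings, not hand-written rules, and the `preference_score` function, the hedge phrases, and both candidate answers (including the invented statistic) exist only for illustration.

```python
# Phrases that signal the answer is admitting uncertainty.
HEDGES = ("i don't know", "i'm not sure", "i am not sure")


def preference_score(answer):
    """Toy score: fuller answers look more helpful, hedging looks less helpful.

    Note that nothing in this score checks whether the answer is true.
    """
    text = answer.lower()
    score = min(len(text.split()), 20) / 20
    if any(hedge in text for hedge in HEDGES):
        score -= 0.5
    return score


candidates = [
    "I'm not sure; I don't have a source for that figure.",
    # Fabricated statistic, included on purpose as the "confident falsehood".
    "Our 2023 survey found that 87 percent of customers prefer the new design.",
]

best = max(candidates, key=preference_score)
print(best)
```

Because the score never looks at accuracy, the made-up statistic wins over the honest answer. Any objective that rewards sounding helpful more than being right can tilt a model the same way.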

Interpolation Between Patterns

As we mentioned, an LLM is a pattern-matching machine. It generates novel content not by reasoning, but by statistically blending the billions of examples it saw during training, a process called interpolation. When you submit a query, the model creates an output that sits within the statistical space of all the patterns it has learned. The problem arises when this massive dataset contains contradictory or ambiguous information. Instead of picking one reliable fact, the model attempts to synthesize or interpolate between the competing patterns. This stitching together of different, plausible fragments results in a unique but non-existent “fact.”

Why Marketers Should Care About AI Hallucinations

Now, let’s get to the crux of all of this: why should marketers care so deeply about AI hallucinations? Beyond AI’s technical intricacies, the implications for your brand and your audience are cataclysmic; just look at what happened to Google.

Erosion of Trust

The most immediate and damaging consequence is the erosion of trust, which is even more debilitating now that trust in marketing is at an all-time low.

So now, when AI-generated content containing factual errors or misleading claims slips through your vetting process, it validates that existing distrust. Consumers already have an aversion to AI-generated content, and hallucinations make it obvious that your content has been created by AI. These mistakes scream “lazy” or “careless,” essentially proving consumers right in their skepticism.

The long-term effect of this is degraded brand trust, and trust is much easier to lose than earn, especially in this context.

Damaged Brand Reputation

Consider how severely a hallucination could damage your brand reputation. Remember when fake news started to populate our feeds? Now imagine your brand being the unintentional purveyor of such content, the kind that gets penalized on social media.

The internet remembers, and regaining a tarnished reputation can take years, if it’s even possible. Credibility is hard-won and easily lost, especially when the mistake is perceived as preventable and due to a lack of human oversight.

Legal & Compliance Risks

Beyond the hit to your public image, there are tangible legal and compliance risks. In many industries, particularly those that are heavily regulated (like finance, healthcare, or pharmaceuticals), making false or unsubstantiated claims isn’t just bad marketing; it’s illegal.

Publishing misleading content generated by an AI can lead to substantial fines, legal action, and severe regulatory penalties. Even outside these sectors, misrepresenting facts can result in consumer lawsuits or adverse PR campaigns.

Lost Efficiency Gains

The primary appeal of generative AI tools for marketers is the promise of speed and scale. AI can churn out content far faster than any human.

However, if that AI-generated content is prone to hallucinations, the supposed time-savings vanish. The time you save in creation is then negated, or even surpassed, by the time required for a human to meticulously fact-check, verify, and edit the output. If you bypass this critical human verification step, you’re not gaining efficiency; you’re simply exposing your brand to all the aforementioned risks, turning a potential asset into a liability.

How to Mitigate the Risk of AI Hallucinations

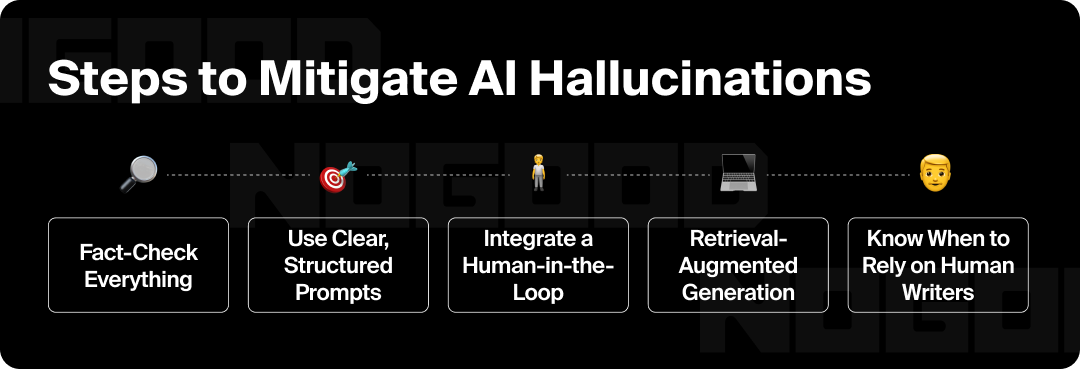

AI hallucinations might be an inherent part of how these models work, but that doesn’t mean you’re helpless. The key is to integrate a solid workflow that prioritizes human oversight. Here are four actionable strategies marketers can use to significantly reduce the risk of AI hallucinations and protect their brand.

1. Fact-Check Everything

The golden rule of using AI in marketing is to treat every single output as a first draft. Never, under any circumstances, should AI-generated content go live without a human review. A subject matter expert on your team must verify all information, statistics, claims, and even tone of content to ensure it aligns with your brand’s standards and, most importantly, is factually correct.

2. Use Clear, Structured Prompts

The quality of an AI’s output is directly tied to the quality of your input. Instead of vague, open-ended questions, be highly specific. Provide the AI with data points, links to credible sources, a clear definition of your brand’s voice and tone, and any other relevant context it needs. This practice is known as AI prompt engineering, and guiding AI with a structured prompt dramatically reduces its likelihood of hallucinating.
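One way to keep prompts structured is to assemble them from the same fields every time. The sketch below is only a starting point: `build_prompt` is a hypothetical helper, and the task, facts, and example.com link are placeholders you would swap for your own.

```python
def build_prompt(task, audience, brand_voice, facts, sources):
    """Assemble a structured prompt from the context the model needs."""
    fact_lines = "\n".join(f"- {fact}" for fact in facts)
    source_lines = "\n".join(f"- {source}" for source in sources)
    return (
        f"Task: {task}\n"
        f"Audience: {audience}\n"
        f"Brand voice: {brand_voice}\n"
        f"Use only these facts:\n{fact_lines}\n"
        f"Credible sources to cite:\n{source_lines}\n"
        "If a claim is not supported by the facts above, leave it out "
        "and list it under 'Needs verification'."
    )


prompt = build_prompt(
    task="Write a 100-word product announcement.",
    audience="Existing customers on our email list",
    brand_voice="Friendly, plain-spoken, no hype",
    facts=["The new plan launches on June 3.", "Existing customers keep their current price."],
    sources=["https://example.com/pricing-update"],  # placeholder URL
)
print(prompt)
```

Spelling out the facts and giving the model a place to park unsupported claims leaves less room for it to invent details on its own.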

3. Integrate a Human-in-the-Loop

The most effective approach is to create a workflow where AI and human expertise work together, not against each other. Think of AI as a creative partner that can handle the initial parts of content creation. The human’s role is to act as the final authority, providing the critical polish, nuance, and fact-checking that ensures accuracy. AI handles the speed, and the human handles the trust.
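If your content pipeline is automated, the human sign-off can be enforced in code rather than left to habit. Here is a minimal sketch of that idea; the `Draft` class and the `approve` and `publish` functions are hypothetical names, not part of any particular tool.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Draft:
    text: str
    approved_by: Optional[str] = None  # name of the human reviewer, once signed off
    open_issues: List[str] = field(default_factory=list)  # claims still unverified


def approve(draft, reviewer):
    """Record a human sign-off, but only once every flagged claim is resolved."""
    if draft.open_issues:
        raise ValueError("Resolve flagged claims before approving.")
    draft.approved_by = reviewer


def publish(draft):
    """Refuse to publish anything a human has not approved."""
    if draft.approved_by is None:
        raise PermissionError("AI draft has no human sign-off.")
    return f"PUBLISHED: {draft.text}"


draft = Draft(text="Our new plan launches on June 3.")
try:
    publish(draft)
except PermissionError as error:
    print(error)  # the unreviewed draft is blocked

approve(draft, reviewer="Sam")
print(publish(draft))
```

The gate is intentionally blunt: AI can produce the draft as fast as it likes, but nothing goes live until a named person has taken responsibility for it.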

4. Know When to Rely on Human Writers

For highly technical, nuanced, or sensitive topics, the best tool might not be AI at all. When the content requires deep subject matter expertise, complex reasoning, or a high degree of empathy and understanding, it’s often safer and more effective to rely on human writers. The time saved from using AI on a simple blog post can be invested in having a human professional handle a crucial piece of content for your brand.

Protecting Your Brand From AI Hallucinations

The reality is that AI hallucinations aren’t going away anytime soon. They are a fundamental problem that comes with using AI, but at least they’re a manageable one.

Using AI sloppily doesn’t just put your bottom line at risk; it has the potential to ruin your reputation. Every piece of content you put out, whether written by a human or an AI, reflects on your brand’s integrity.

So, how do you move forward? Our solution is this: always have a human-in-the-loop. Treat your AI tools and LLMs as the assistants they are, and forgo dumping the creative burden entirely on them. Fact-check every output, refine your prompts, and understand the limits of the technology.

With this in mind, try to remember: AI makes you more efficient, but it’s your humanity that makes you trusted.